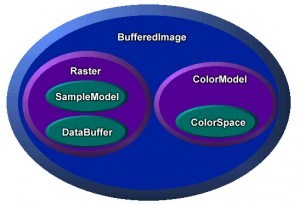

Recently I have been working on some code that makes heavy use of BufferedImages. I found some oddities whilst working with them that you may or may not already know. In the spirit of educating others, I thought I would mention what I found to hopefully help you avoid confusion.

1. Sub Images are not what you think.

So you have a large image and you want to get a small section of that image, convert it to a byte array, then do something with that array. So let's use BufferedImage.getSubimage(int x, int y, int w, int h), then get the data from the returned BufferedImage. Easy. Nope.

Turns out that when you check the documentation you will find the following line, which I overlooked: “The returned BufferedImage shares the same data array as the original image”. When I tried the above I kept getting the data for the entire image instead of the data for the smaller sub image I wanted, because the sub image's data buffer is still the one that belongs to the larger image.

Now there are many ways to get around this issue, but as the BufferedImage is manipulated and then passed to other methods that get the byte[] of the image, I can’t just specify the area of the Raster required; the area is unknown by that point. So what can be done is to create a new BufferedImage at the correct size and draw the sub image onto it. Nice and easy, the image passed on only contains the required data.
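As a rough illustration of the workaround, here is a minimal sketch. The class and method names (`SubImageCopy`, `copyRegion`, `dataLength`) are made up for this example, and it assumes an 8-bit grayscale image (`TYPE_BYTE_GRAY`) rather than the indexed image I was actually working with, so treat it as a starting point only.

```java
import java.awt.Graphics2D;
import java.awt.image.BufferedImage;
import java.awt.image.DataBufferByte;

public class SubImageCopy {

    // Hypothetical helper: draws the requested region into a brand-new image
    // that owns its own backing array.
    public static BufferedImage copyRegion(BufferedImage source, int x, int y, int w, int h) {
        // The sub image is only a view; it shares the source's data array.
        BufferedImage view = source.getSubimage(x, y, w, h);
        // Assumption: the target is 8-bit grayscale. Pick the type that suits your data.
        BufferedImage copy = new BufferedImage(w, h, BufferedImage.TYPE_BYTE_GRAY);
        Graphics2D g = copy.createGraphics();
        try {
            g.drawImage(view, 0, 0, null);
        } finally {
            g.dispose();
        }
        return copy;
    }

    // Length of the byte[] behind the image (only valid for byte-backed images).
    public static int dataLength(BufferedImage image) {
        return ((DataBufferByte) image.getRaster().getDataBuffer()).getData().length;
    }

    public static void main(String[] args) {
        BufferedImage source = new BufferedImage(100, 80, BufferedImage.TYPE_BYTE_GRAY);
        BufferedImage view = source.getSubimage(10, 10, 20, 20);
        BufferedImage copy = copyRegion(source, 10, 10, 20, 20);

        System.out.println(dataLength(view)); // prints 8000, the whole 100 x 80 image
        System.out.println(dataLength(copy)); // prints 400, just the 20 x 20 region
    }
}
```

The copy can then be handed to any method that pulls the byte[] out of the image without that method needing to know where the region came from.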

2. The byte data of the image might not actually be packed the way you expect.

So I have a section of the image and want to get the byte data from it. This was done using BufferedImage.getRaster().getDataBuffer().getData() (with the DataBuffer cast to DataBufferByte). The problem I had here was assuming the data was organised a certain way.

The image is an indexed grayscale image containing 16 colors defined with 4 bits. Here I thought that each byte would describe two pixels (2 x 4 bits per byte) but apparently not: the 4 least significant bits (lsb) are used to represent the pixel color and the 4 most significant bits (msb) are not used. This is not very useful considering I need the data so that each byte contains 2 pixels.

The solution: run through the byte array and merge the values together in pairs, so the first value occupies the 4 msb and the second value occupies the 4 lsb, then move on to a new byte. Rinse and repeat till done. Should the final value occupy the 4 msb with no second value to pair it with, fill the last 4 lsb with zeros.
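The merging step described above could look something like the sketch below. `NibblePacker` and `packNibbles` are names invented for this example, and it treats the input as one flat run of one-pixel-per-byte values, which is an assumption; if your target format pads each row to a byte boundary you would need to pack row by row instead.

```java
public class NibblePacker {

    // Packs one-pixel-per-byte values (only the low 4 bits used) into two pixels per byte.
    public static byte[] packNibbles(byte[] unpacked) {
        // Round up so an odd number of pixels still gets a final byte.
        byte[] packed = new byte[(unpacked.length + 1) / 2];
        for (int i = 0; i < unpacked.length; i++) {
            int value = unpacked[i] & 0x0F; // ignore the unused 4 msb
            if ((i & 1) == 0) {
                // First pixel of the pair goes in the 4 msb; the 4 lsb start as zero.
                packed[i / 2] = (byte) (value << 4);
            } else {
                // Second pixel of the pair fills the 4 lsb.
                packed[i / 2] |= (byte) value;
            }
        }
        return packed;
    }

    public static void main(String[] args) {
        byte[] packed = packNibbles(new byte[] {0x1, 0xF, 0x3});
        System.out.printf("%02X %02X%n", packed[0], packed[1]); // prints "1F 30"
    }
}
```

Because each new byte is written with the first value shifted into the 4 msb, a trailing unpaired value automatically ends up with zeros in its 4 lsb.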
